Cloud computing brings many benefits to companies. It increases staff productivity by reducing the work connected with maintaining on-premises infrastructure. It makes it easier to provide secure and highly available services. In addition, a wide range of varied, quickly accessible services encourages companies to experiment more and respond faster to market changes.

Still, the first question customers ask as they begin their cloud journey is whether it helps to decrease costs. With cloud computing, you no longer need to worry about the costs of your own infrastructure, licenses, maintenance, etc. As a result, the total cost of ownership of your whole IT can be significantly reduced. However, can even more be achieved?

In this article, I would like to share some tips I learned during my work with Pretius’s infrastructure. They may help you optimize your cloud costs easily and change your IT infrastructure mindset to “the cloud way of thinking”.

The article is based on the pricing models of two large public cloud providers: Amazon Web Services and Google Cloud Platform. However, other providers use similar concepts in their price lists.

When to optimize costs

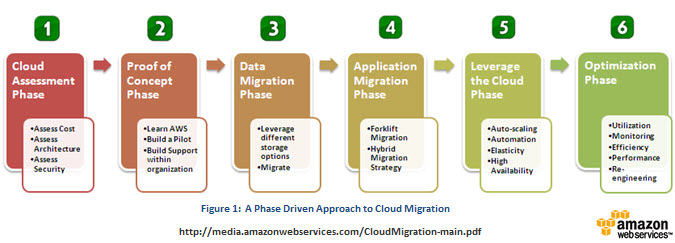

Keep in mind that cost optimization is the last step in the cloud migration process. You have to avoid premature optimization without empirical knowledge about your system behavior in the cloud environment.

Phases of cloud migration process (https://aws.amazon.com/blogs/aws/new-whitepaper-migrating-your-existing-applications-to-the-aws-cloud/)

Measure everything

Before you start thinking about optimization, you should set up comprehensive monitoring of your infrastructure and gather data about resource usage, system performance, etc. Without this data, you cannot verify the impact of your changes. AWS CloudWatch or GCP Stackdriver will be very helpful.

When you optimize costs, you should also measure the savings. Don’t focus only on the total sum per month. Dive deep into the reports provided by your cloud platform and break the costs down by service. This gives you a more effective feedback loop and helps you plan the next steps.
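To make the idea of looking below the monthly total more concrete, here is a minimal sketch that compares two months service by service. The row format `(service, cost)` and the numbers are assumptions for illustration only; a real billing export from your provider will have its own columns.

```python
from collections import defaultdict

def cost_by_service(line_items):
    """Sum cost per service from (service, cost) billing rows."""
    totals = defaultdict(float)
    for service, cost in line_items:
        totals[service] += cost
    return dict(totals)

def savings_by_service(before, after):
    """Per-service difference (before - after); positive means money saved."""
    services = set(before) | set(after)
    return {s: round(before.get(s, 0.0) - after.get(s, 0.0), 2) for s in services}

# Hypothetical billing rows: (service, cost in USD)
last_month = [("compute", 420.0), ("storage", 80.0), ("compute", 30.0)]
this_month = [("compute", 310.0), ("storage", 85.0)]

report = savings_by_service(cost_by_service(last_month), cost_by_service(this_month))
for service, saved in sorted(report.items()):
    print(f"{service}: {saved:+.2f} USD")
```

In this made-up example the total went down, but storage actually got more expensive, which is exactly the kind of detail a single monthly sum hides.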

Step 1 – turn off what you aren’t using

Cloud providers usually charge only for used resources: CPU and RAM time (billed per minute or per second), storage, bandwidth, etc. So the first thing you should do with an unused virtual machine is turn it off. If you don’t need it anymore, remove it and release any resources it used, such as storage or IP addresses. It seems obvious, but many people don’t do it often enough.
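Leftover disks and IP addresses are easy to overlook, so it can help to scan an inventory for them. The sketch below assumes a hypothetical inventory format (a list of dictionaries with an `attached_to` field); in practice you would build such a list from your provider’s API or console export.

```python
def find_idle_resources(resources):
    """Return names of resources that are not attached to any machine.

    A missing or empty `attached_to` field is treated as "not attached".
    """
    return [r["name"] for r in resources if r.get("attached_to") is None]

# Hypothetical inventory, for illustration only
inventory = [
    {"name": "disk-app", "type": "disk", "attached_to": "vm-app"},
    {"name": "disk-old-backup", "type": "disk", "attached_to": None},
    {"name": "ip-legacy", "type": "ip", "attached_to": None},
]

idle = find_idle_resources(inventory)
print("Candidates for removal:", idle)
```

Treat the result as a list of candidates to review, not as something to delete automatically.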

A less obvious optimization concerns services you use daily. Check whether you really need them 24/7. Maybe you use some virtual machines for scheduled processing that takes only two hours a day? If so, turn them on automatically to do the job and then turn them off or even remove them.

Another example concerns dev and test environments. In most cases, they can be turned off at night and at weekends. You can automate this process using tags and serverless functions. Read more about how to do it in Google Cloud.
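The decision logic behind such a tag-based schedule can be sketched in a few lines. Everything here is an assumption for illustration: the `env` tag name, the office hours (7:00 to 19:00, Monday to Friday) and the instance dictionaries. The actual stop call to your cloud provider is deliberately left out.

```python
from datetime import datetime

def should_be_running(tags, now):
    """Decide whether an instance should run at `now`, based on its tags."""
    if tags.get("env") not in ("dev", "test"):
        return True  # never touch production or untagged machines
    is_weekday = now.weekday() < 5       # Monday=0 ... Friday=4
    in_office_hours = 7 <= now.hour < 19
    return is_weekday and in_office_hours

def instances_to_stop(instances, now):
    """Return ids of running instances that should be off at `now`."""
    return [
        i["id"]
        for i in instances
        if i["state"] == "running" and not should_be_running(i["tags"], now)
    ]

# Hypothetical instance list
instances = [
    {"id": "vm-dev", "state": "running", "tags": {"env": "dev"}},
    {"id": "vm-prod", "state": "running", "tags": {"env": "prod"}},
    {"id": "vm-test", "state": "stopped", "tags": {"env": "test"}},
]

print(instances_to_stop(instances, datetime.now()))
```

A serverless function triggered on a schedule could run this check and stop whatever it returns. Defaulting to "keep running" for anything that is not explicitly tagged as dev or test is a cautious design choice: a missing tag should never shut down production.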

Step 2 – right-sizing

Regularly check the utilisation of resources in your cloud. Verify average hourly usage of CPU, memory and IOPS. Is it too low? If so, consider downsizing.
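A check like this is easy to script once you have the monitoring data. Below is a minimal sketch that flags machines whose average CPU and memory usage both stay below a threshold. The thresholds (20% and 30%), the sample format and the machine names are my assumptions, not recommendations; IOPS could be added in the same way.

```python
from statistics import mean

def rightsizing_candidates(usage, cpu_threshold=20.0, mem_threshold=30.0):
    """Return names of machines whose average CPU and memory usage
    (in percent) are both below the given thresholds."""
    candidates = []
    for name, samples in usage.items():
        avg_cpu = mean(s["cpu"] for s in samples)
        avg_mem = mean(s["mem"] for s in samples)
        if avg_cpu < cpu_threshold and avg_mem < mem_threshold:
            candidates.append(name)
    return sorted(candidates)

# Hypothetical hourly samples (percent of capacity)
hourly_usage = {
    "vm-api": [{"cpu": 55, "mem": 60}, {"cpu": 70, "mem": 65}],
    "vm-reports": [{"cpu": 5, "mem": 20}, {"cpu": 9, "mem": 25}],
}

print(rightsizing_candidates(hourly_usage))
```

Averages can hide short peaks, so before downsizing a flagged machine it is worth looking at its maximum usage as well.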

When you plan on-premises infrastructure, you have to provision for future load growth up front. Cloud computing lets you scale your infrastructure up within seconds. So make use of that and don’t pay for capacity you aren’t using at the moment.

The next thing to verify is how often you access your data on storage. Consider moving archive-like data to a “colder” class of storage to reduce cost per GB drastically.
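A quick calculation shows when a colder class pays off. Colder classes typically have a lower price per GB but charge for retrieving data, so both have to be included. All prices below are hypothetical placeholders; check your provider’s current price list.

```python
def monthly_storage_cost(size_gb, price_per_gb,
                         retrieved_gb=0.0, retrieval_price_per_gb=0.0):
    """Monthly cost = storage fee + fee for data read back from storage."""
    return size_gb * price_per_gb + retrieved_gb * retrieval_price_per_gb

# Hypothetical prices in USD, for illustration only
standard = monthly_storage_cost(5000, price_per_gb=0.023)
cold = monthly_storage_cost(5000, price_per_gb=0.004,
                            retrieved_gb=100, retrieval_price_per_gb=0.02)

print(f"standard: {standard:.2f} USD, cold: {cold:.2f} USD")
```

The more often you read the data, the smaller the advantage becomes, which is why the access pattern should be verified first.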

Step 3 – autoscaling

It is usually a better idea to scale your infrastructure with many small machines than with a few big ones. If your application is not under constant load and there are usage peaks, consider using autoscaling. Cloud services can scale up fast to handle heavier traffic, but they can also scale down when usage is low, which saves you money.
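To illustrate how an autoscaler can decide on the number of machines, here is a sketch of a simple proportional rule: keep the average CPU usage close to a target value. The target (60%) and the limits are assumptions; real autoscalers also add cooldown periods and smoothing, which are omitted here.

```python
import math

def desired_instances(current, avg_cpu, target_cpu=60.0,
                      min_instances=1, max_instances=10):
    """Proportional scaling: if the load per machine is above the target,
    add machines; if it is below, remove them. The result is clamped to
    the [min_instances, max_instances] range."""
    wanted = math.ceil(current * avg_cpu / target_cpu)
    return max(min_instances, min(max_instances, wanted))

print(desired_instances(current=4, avg_cpu=90))  # load above target: scale up
print(desired_instances(current=4, avg_cpu=15))  # load below target: scale down
```

With small machines each scaling step is small, so the capacity you pay for follows the real load more closely.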

If your application is stateless and fault-tolerant, you can save even more by handling part of your traffic with Preemptible/Spot virtual machines. They are short-lived, low-cost VMs that cloud providers offer to make use of their spare capacity. They can be up to 90% cheaper than regular machines.
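The effect of moving part of a fleet to such machines can be estimated with simple arithmetic. In this sketch the on-demand price, the share of spot machines and the 70% discount are hypothetical values chosen for illustration.

```python
def blended_hourly_cost(instances, on_demand_price, spot_fraction, spot_discount):
    """Hourly cost of a fleet where `spot_fraction` of the machines run
    as spot/preemptible VMs at `spot_discount` off the on-demand price."""
    spot = instances * spot_fraction
    regular = instances - spot
    return regular * on_demand_price + spot * on_demand_price * (1 - spot_discount)

# Hypothetical fleet: 10 machines at 0.10 USD/h
all_regular = blended_hourly_cost(10, 0.10, spot_fraction=0.0, spot_discount=0.7)
half_spot = blended_hourly_cost(10, 0.10, spot_fraction=0.5, spot_discount=0.7)

print(f"all regular: {all_regular:.2f} USD/h, half spot: {half_spot:.2f} USD/h")
```

Keeping some regular machines in the fleet is a deliberate choice: spot machines can be taken away at short notice, so the rest of the fleet has to absorb the traffic.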

Step 4 – long-term discounts

Cloud services are billed in the “pay as you go” model by default: customers pay only for the resources they use. This approach helps customers experiment easily with new solutions without long-term investments.

However, when you host 24/7 production workloads, you lose the benefits of on-demand pricing. If you can identify services you want to use long-term, consider switching to a reserved capacity model. Cloud providers offer discounts of up to 70% for a 1-year or 3-year commitment for VMs or database services.

You shouldn’t reserve the full capacity needed by the hosted application if the workload changes during the day. Apply the discount only to the baseline, that is, to the instances providing the computing power needed most of the time.
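The question of how much to reserve can be answered by trying each reservation level against a typical day. The sketch below assumes a hypothetical demand profile and hypothetical hourly prices (a reserved machine costs 40% less than an on-demand one, but is paid for every hour whether it is used or not).

```python
def daily_cost(hourly_demand, reserved, on_demand_price, reserved_price):
    """Cost of one day: reserved machines are paid for every hour,
    demand above the reservation is covered on demand."""
    cost = 0.0
    for needed in hourly_demand:
        cost += reserved * reserved_price
        cost += max(0, needed - reserved) * on_demand_price
    return cost

def best_reservation(hourly_demand, on_demand_price, reserved_price):
    """Try every reservation level from 0 to the daily peak, return the cheapest."""
    options = range(0, max(hourly_demand) + 1)
    return min(options, key=lambda r: daily_cost(
        hourly_demand, r, on_demand_price, reserved_price))

# Hypothetical profile: 2 machines for 16 hours, 6 machines during an 8-hour peak
demand = [2] * 16 + [6] * 8
best = best_reservation(demand, on_demand_price=0.10, reserved_price=0.06)

print(f"reserve {best} machines, "
      f"daily cost {daily_cost(demand, best, 0.10, 0.06):.2f} USD")
```

In this example the cheapest option is to reserve only the two machines that run all day. A third reserved machine would be used just 8 hours out of 24, which is too little to earn back the commitment at these prices.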

Step 5 – ready-to-use services

The public cloud is full of ready-to-use services that solve common software development problems. Usually, they are billed in a pay-per-use model, which can be cheaper than running a virtual machine with the same software installed.
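Whether pay-per-use is really cheaper depends on the volume, so it is worth calculating the break-even point. The price per million requests and the monthly VM cost used below are hypothetical; replace them with the numbers from your provider’s price list.

```python
def cheaper_option(requests_per_month, price_per_million, vm_monthly_cost):
    """Compare a pay-per-use service with an always-on VM for a given volume."""
    pay_per_use = requests_per_month / 1_000_000 * price_per_million
    if pay_per_use < vm_monthly_cost:
        return ("pay-per-use", pay_per_use)
    return ("vm", vm_monthly_cost)

def break_even_requests(price_per_million, vm_monthly_cost):
    """Monthly request count at which both options cost the same."""
    return vm_monthly_cost / price_per_million * 1_000_000

# Hypothetical prices: 0.40 USD per million requests, 30 USD per month for a VM
print(cheaper_option(5_000_000, 0.40, 30.0))
print(f"break-even: {break_even_requests(0.40, 30.0):,.0f} requests per month")
```

Below the break-even volume the managed service wins on price alone, and this comparison does not even count the time you would spend maintaining the VM.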

For example, you can use GCP Stackdriver, AWS CloudWatch, or Middleware to monitor your infrastructure, analyze logs and receive alerts about anomalies. Many tasks can be done for free or very cheaply thanks to serverless functions provided by AWS Lambda or GCP Cloud Functions. GCP Identity Platform or Azure Active Directory B2C will help you manage your application users.

Most of those services are free for smaller workloads, which makes using them even more cost-effective.

Step 6 – free tier

Cloud providers offer some services for free. Check if they can meet your needs. For example, you can handle 1 million requests per month using AWS Lambda or send 100 e-mails per day for free thanks to SendGrid.
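To check whether a free tier covers your needs, compare your expected usage with the free allowance and price only the remainder. The sketch uses the 1 million requests per month mentioned above as the allowance; the price per request is a hypothetical value.

```python
def billable_units(used, free_allowance):
    """Units you actually pay for after the free allowance is used up."""
    return max(0, used - free_allowance)

def monthly_charge(used, free_allowance, price_per_unit):
    """Charge for usage above the free allowance."""
    return billable_units(used, free_allowance) * price_per_unit

FREE_REQUESTS = 1_000_000       # free allowance per month
PRICE_PER_REQUEST = 0.0000002   # hypothetical price in USD

for used in (800_000, 1_500_000):
    charge = monthly_charge(used, FREE_REQUESTS, PRICE_PER_REQUEST)
    print(f"{used:,} requests -> {charge:.2f} USD")
```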

Check the free components offered by the most popular public cloud providers:

- Amazon Web Services: https://aws.amazon.com/free

- Google Cloud Platform: https://cloud.google.com/free

- Microsoft Azure: https://azure.microsoft.com/free/

- Oracle Cloud: https://www.oracle.com/cloud/free/#always-free

Summary

Not every solution presented here may suit your case. Sometimes, the cost of reengineering can be higher than the savings obtained.

At Pretius, implementing only the first two steps described in this article reduced the cost of our AWS infrastructure by 17%. No development was needed, and the time spent on analysis paid for itself after the first month.

I hope this article has sparked your interest in cloud cost optimization and will help you save money.